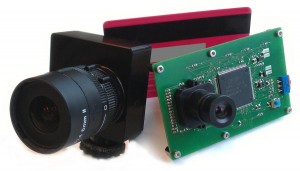

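DVS and DAVIS – Dynamic Vision Sensors

Dynamic vision sensors (DVS) take a fundamentally different approach to machine vision: instead of capturing frames, each pixel asynchronously reports changes in brightness as events. Full information about DVS can be found at the spin-off company iniVation.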


DAS – Dynamic Audio Sensors

Dynamic audio sensors (DAS) model the cochlea, sending an asynchronous, spike-encoded representation of auditory activity.


DYNAP – Dynamic Neuromorphic Asynchronous Processor

DYNAP is a mixed-signal, spike-based neuromorphic processor for general-purpose computation and for processing signals from the DVS/DAVIS and DAS. Full information about DYNAP can be found at the spin-off company SynSense.

Legacy and Discontinued Products

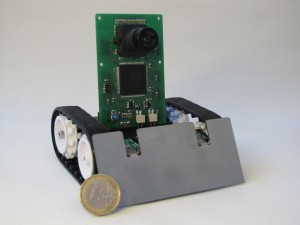

Pushbot

The Pushbot is a robotic platform with an embedded DVS, built for research into event-based sensorimotor systems.


Physiologist’s Friend

The Physiologist’s Friend Chip electronically emulates cells in the visual system and responds realistically to visual stimuli. Researchers and teachers can use this chip as a simple substitute animal for training students, developing new techniques, or testing experimental setups before using an animal.
